Get the complete picture of your hardware, server, client, virtual, cloud and software assets from purchase to disposal.

Improve efficiency

Save time and reduce resources dedicated to managing your assets.

Ivanti Neurons for ITAM

Ivanti Neurons for ITAM consolidates your IT asset data and lets you track, configure, optimize and strategically manage your assets through their full lifecycle. The solution's configurable design helps you define and follow your own workflows or implement out-of-the-box processes.

Reduce downtime, increase productivity and gain an accurate picture of your IT environment for better decision making.

Avoid financial risks and security threats, reduce theft and loss, identify machines at risk and ensure your assets are used appropriately.

Pre-populate your asset repository in minutes with real-time discovery, automated reconciliation and normalization.

The asset repository integrates with your service management CMDB for up-to-date asset information, easy request management and improved service delivery.

Use the mobile app to manage your IT assets while remote or on the move. Search for assets, update fields, check for incidents and apply automated quick actions.

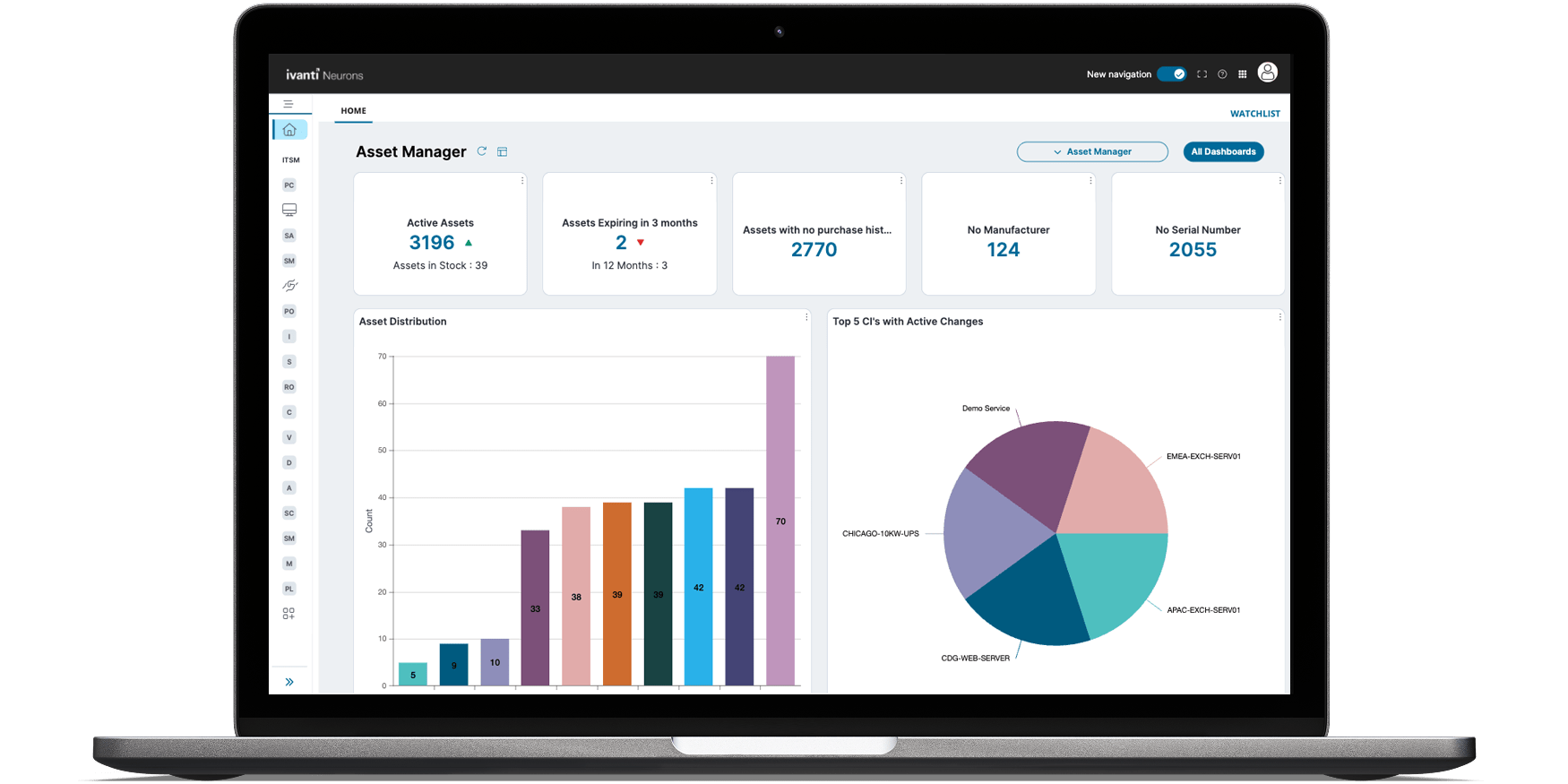

With Ivanti Neurons for ITAM, you gain comprehensive visibility of hardware and software assets, enabling you to optimize costs and improve efficiencies across the full asset management lifecycle.

This little guy monitors the health of a device’s battery so you know how it’s running, when it needs maintenance, and even when it needs to be replaced.

Features and capabilities

Make the most of your IT investments – anytime, from anywhere.

Manage assets consistently from procurement through purchase order, invoicing, receipt, deployment and disposal.

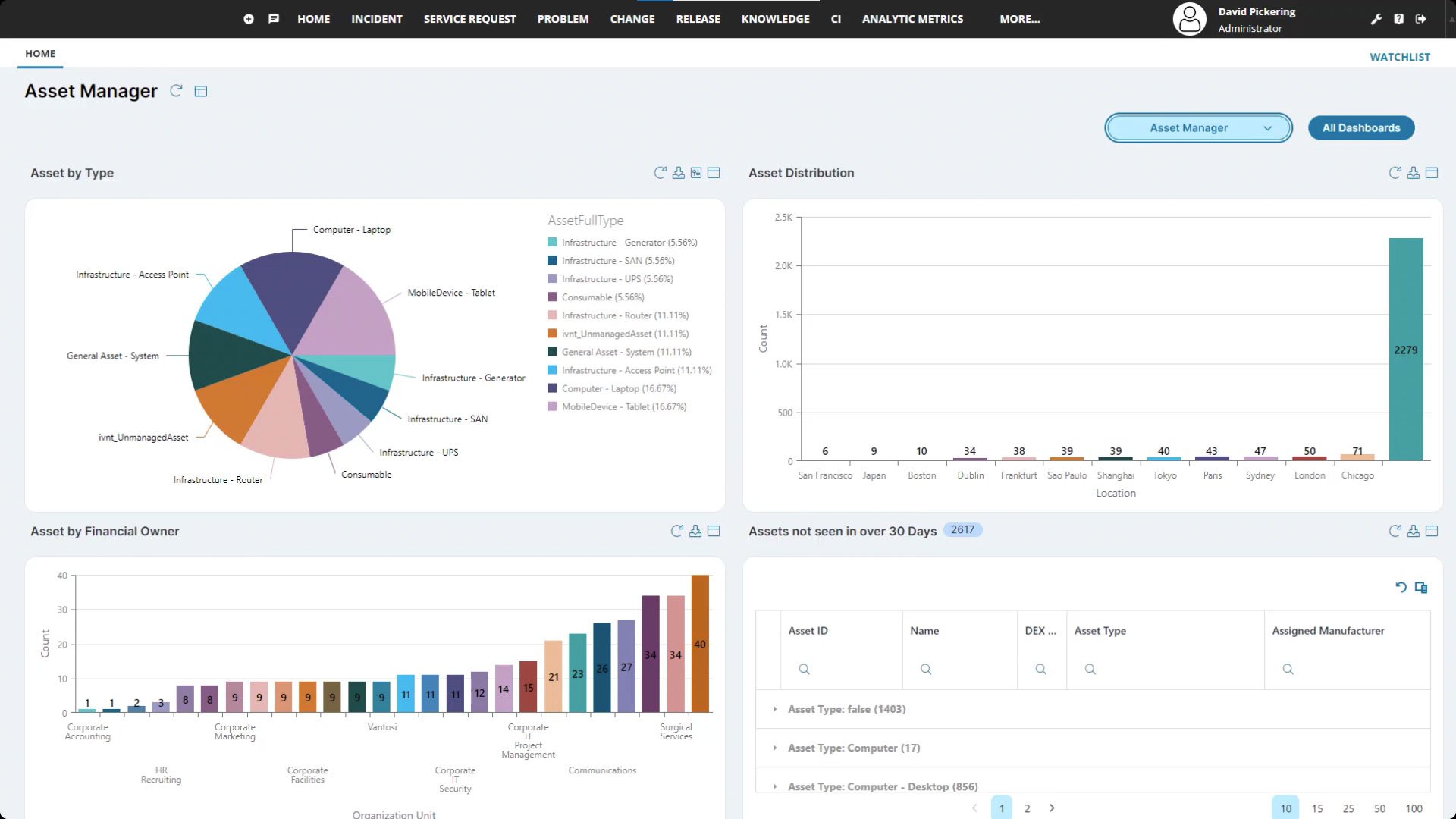

Keep track of asset information, including identifying data, lifecycle status, stock, location and warranty information.

Gain visibility into purchased and assigned assets, current stock levels and active orders to speed up provisioning while reducing service desk calls.

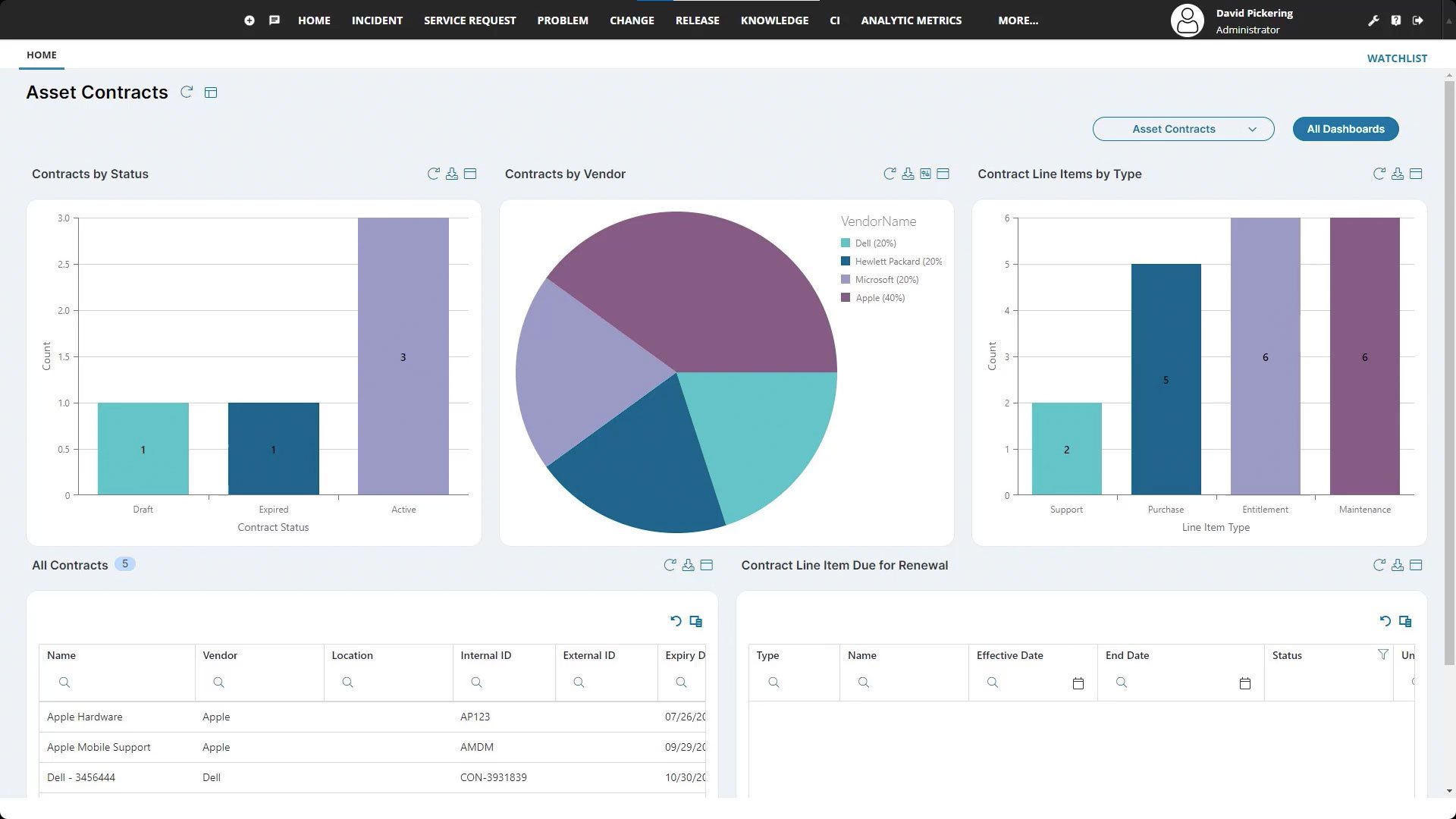

Report on IT spend and calculate and track asset age and value. View and manage contracts effectively and make informed decisions for contract negotiations.

Speed up data capture and retrieval: scan an asset to look up or modify its information, or scan multiple assets as part of asset tracking.

Store vendor information and aggregate performance in vendor scorecards to ensure you are managing strategic vendors effectively.

Hosted on Ivanti's multi-tenant, cloud-based technology platform; ISO 27001 certified. Ivanti Neurons for ITAM is also available on-premises.

Create beautiful dashboards of IT asset data.

Employ automation across your IT systems.

Achieve better outcomes for IT, your users and your entire business through integrations with other Ivanti products.

Stop firefighting and start being strategic with your IT asset management software.